About Stake Casino Australia Review Methodology

How this Stake Casino Australia review is researched and updated for Australian players, with practical checks for payouts, bonus terms, support, and safety.

- 🔎 Evidence is logged before scoring

- 📘 Uncertain findings are labeled explicitly

- 🧪 Repeat checks beat one-night impressions

📌 Editorial framework and source weighting

🔍 What is measured first

I score operational reliability before anything flashy: payout path clarity, rule readability, support usefulness, and how visible responsible gambling controls are before the first deposit. A huge game lobby does not rescue weak process behavior. If a claim looks strong on paper but fails repeated checks, the claim gets downgraded. That keeps the review practical for players who care about real outcomes, not screenshots.

My source weighting is simple: direct test logs and reproducible interactions carry more weight than generic affiliate summaries. Community complaints and praise are still valuable, but only after they line up with observable mechanics. I also separate platform friction from player mistakes in the notes. That distinction matters because the fix path is different: one needs provider intervention, the other needs better routine discipline.

✅ Why this helps readers

A transparent method lets you audit my reasoning instead of trusting tone. If you disagree with a verdict, you can inspect the exact checkpoint categories and decide where your own priorities differ. That is healthier than treating reviews like final truth. The goal is decision support, not hero storytelling.

📌 Repeat testing before score updates

🔍 Reproducibility rules

One clean session proves almost nothing. I repeat core actions across separate sessions: deposit path, bonus activation behavior, withdrawal request formatting, and escalation flow. Each pass is logged with timestamps and expected vs actual outcomes. A smooth run can coexist with a rough run; only a direction that repeats becomes a trustworthy signal.
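A logged pass could be captured with a structure like the one below. This is a minimal sketch, not the tooling behind this review: the `CheckPass` name, its fields, the `direction` helper, and its labels are all hypothetical, chosen only to show timestamps and expected vs actual outcomes side by side.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class CheckPass:
    """One logged pass of a core action (hypothetical structure)."""
    action: str    # e.g. "deposit path" or "escalation flow"
    expected: str  # what the documented process says should happen
    actual: str    # what was observed
    logged_at: datetime = field(
        default_factory=lambda: datetime.now(timezone.utc)
    )

    @property
    def matched(self) -> bool:
        # Case and surrounding whitespace are ignored when comparing.
        return self.expected.strip().lower() == self.actual.strip().lower()


def direction(passes: list[CheckPass]) -> str:
    """Summarise repeated passes of one action; a single pass is never a signal."""
    if len(passes) < 2:
        return "insufficient"
    outcomes = {p.matched for p in passes}
    if outcomes == {True}:
        return "consistently smooth"
    if outcomes == {False}:
        return "consistently rough"
    return "mixed"
```

A "mixed" result is the smooth-run-next-to-rough-run case: it is worth recording, but it is not yet a direction.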

I also test under different mental states and tempos because user behavior changes outcomes. Calm planned sessions produce one type of friction map, rushed promo chasing produces another. If a platform collapses only under stress conditions, that still matters. Real players do not operate in perfect lab conditions every night.

✅ Update triggers

Scores are updated when repeated evidence shifts, not when one isolated event goes viral. If new logs contradict old assumptions, the wording is corrected and the confidence level is adjusted. I would rather publish a conservative update than keep a stale confident claim alive.

📌 Cashier and support verification logic

🔍 What qualifies as a useful support response

Fast greetings are not enough. I rate support quality on the first actionable answer, ownership continuity, and whether timeline promises are realistic. Messages that request exact evidence and explain next steps score higher than polite non-answers. This metric is critical during payout or bonus disputes where emotional pressure is high.

On cashier behavior, I verify method visibility, pending-state communication, and escalation readiness. If the process requires detective work, that is UX debt. If the flow is explicit and reproducible, it earns trust even when queues are not instant.

✅ Practical consequence for readers

You can use these same checks in your own sessions: one structured ticket, one clear chronology, and one case thread. This routine reduces confusion and prevents circular conversations when money is already locked in a pending stage.

📌 Correction policy and publication integrity

🔍 How corrections are handled

When fresh evidence conflicts with earlier conclusions, I update the page and tighten language. The correction process is logged by section so readers can see what changed and why. I do not treat edits as cosmetic; they are part of integrity maintenance.

Uncertainty is communicated directly. If a behavior looks inconsistent, I call it conditional rather than definitive. That might look less dramatic, but it is more useful for risk-aware players planning real deposits and real withdrawals.

✅ Final methodology note

Method recap: evidence first, repetition second, conclusions last. That sequence keeps bias lower and helps readers act with clearer expectations.

🗂️ Extended methodology notes

How I separate signal from noise

I keep two parallel logs during every review cycle. The first is a strict event log with timestamps, values, and state changes. The second is a narrative log where I describe context, assumptions, and emotional pressure points that might bias interpretation. Keeping these layers separate stops me from turning one dramatic moment into a headline conclusion. A quick win during a high-volatility run can feel like platform quality, and one rough pending event can feel like platform failure. Both impressions are incomplete without repeat context.

In practical terms, I assign confidence tiers to each observation. A single run is preliminary. Two aligned runs become directional. Three or more aligned runs under different contexts become actionable. This makes the final page more conservative than typical promo-led reviews, but it protects readers from overfitting to short-term variance.
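The tier rule above can be written down as a small function. This is a sketch under stated assumptions: the function name, the "unobserved" label, and the reading of "different contexts" as at least two distinct contexts are mine, not part of the published method.

```python
def confidence_tier(aligned_runs: int, distinct_contexts: int) -> str:
    """Map repeat evidence to a confidence tier.

    Assumption: "under different contexts" is read as at least two
    distinct contexts, so three aligned runs in a single context
    stay "directional".
    """
    if aligned_runs < 1:
        return "unobserved"  # hypothetical label for no evidence yet
    if aligned_runs >= 3 and distinct_contexts >= 2:
        return "actionable"
    if aligned_runs >= 2:
        return "directional"
    return "preliminary"
```

For example, `confidence_tier(3, 1)` returns `"directional"` under this reading, while `confidence_tier(3, 2)` returns `"actionable"`.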

What gets downgraded immediately

I downgrade any claim that depends on unclear wording, hidden conditions, or inconsistent support explanations. If two agents provide different interpretations for the same condition, I mark the topic as low reliability until documentation and workflow align. The reader should not need to guess what rule applies after activation. Ambiguity is operational risk, and operational risk belongs in the score.

I also downgrade sections where usability depends on luck rather than process. If clean outcomes happen only when every variable goes right by chance, that is not stable quality. Stable quality means the average user can follow a documented path and reach predictable outcomes without heroic effort.

How update cadence is decided

Update cadence is not tied to marketing calendars. I update when evidence quality changes. New payout timing behavior, revised term enforcement, support channel drift, or altered verification friction are all triggers. If none of those move, cosmetic edits are not treated as meaningful updates. The point is to preserve trust in the change log by linking every revision to a concrete behavioral shift.

I annotate update windows with what changed and what remained stable. Readers who revisit the page after a few weeks should immediately understand whether the practical risk map moved or just the wording improved. That transparency keeps the review useful as a tool, not just content.

Field-testing in mixed session conditions

Testing only during calm, planned windows creates a flattering bias. Real players open sessions when tired, distracted, or chasing recovery. So I test under mixed conditions: short controlled runs, bonus-heavy sessions, and one deliberately stressful troubleshooting scenario. The goal is not to glorify stress, but to observe how systems behave when user behavior is imperfect, because that is where most friction appears.

This mixed-condition approach also helps isolate which issues are platform-side and which are discipline-side. When the same friction appears in calm and stressed runs, it is likely structural. When it appears only after chaotic user behavior, the recommendation changes toward routine design rather than platform blame.
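The same split can be expressed as a tiny decision helper. It is only an illustration: the function name and return labels are hypothetical, and the calm-only branch is my assumption, since the text above does not cover that case.

```python
def classify_friction(seen_in_calm: bool, seen_in_stressed: bool) -> str:
    """Classify one friction point by the conditions it appeared under."""
    if seen_in_calm and seen_in_stressed:
        # Appears regardless of user behavior.
        return "likely structural (platform-side)"
    if seen_in_stressed:
        # Appears only after chaotic user behavior.
        return "likely discipline-side (routine design)"
    if seen_in_calm:
        # Assumption: not covered by the rule above, so flag for retest.
        return "unclassified (calm-only, retest)"
    return "not observed"
```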

Why this page is intentionally long

Short About pages sound clean but hide method debt. I keep this page detailed because methodology is part of user safety. If readers cannot inspect the logic behind claims, they are forced to trust tone, brand familiarity, or visual polish. That is exactly what high-risk decision environments should avoid.

Length here is not filler. It is an audit trail in plain language. Each block gives enough context for a reader to replicate key checks without specialist tools. Replicability is the strongest anti-bias control available in public review writing.

How uncertainty is written into conclusions

I avoid absolute language unless repeat evidence supports it. Terms like always, instant, guaranteed, or never are usually unhelpful in casino operations where policy, queue load, and account state interact. Instead, I describe likely patterns, known failure modes, and controllable mitigation steps. This gives readers realistic expectations without stripping practical optimism.

When uncertainty remains unresolved, I write it explicitly and explain what new evidence would settle it. This prevents false confidence and gives readers a clear lens for their own live testing.

Reader-first interpretation framework

Every section is written to answer one reader question: what should I do next if this goes right, and what should I do next if it goes wrong? That dual-path framing keeps advice usable during both confidence spikes and frustration spikes. Strategy that works only in one emotional state is incomplete strategy.

I also include concrete fallback moves in most sections: where to pause, what evidence to capture, which escalation pattern to use, and when to exit. These micro-decisions matter more than grand tips when money is moving and emotions are loud.

Final methodology commitment

Method integrity is the real product of this page. If a better process appears, I adopt it and document the change. If evidence contradicts a prior claim, I update fast and visibly. That is the only sustainable way to keep review content trustworthy over time in a fast-changing operational environment.

For readers, the takeaway is straightforward: use this methodology as a reusable template for your own session decisions. Even if your conclusions differ from mine, a structured method will usually outperform impulsive interpretation.

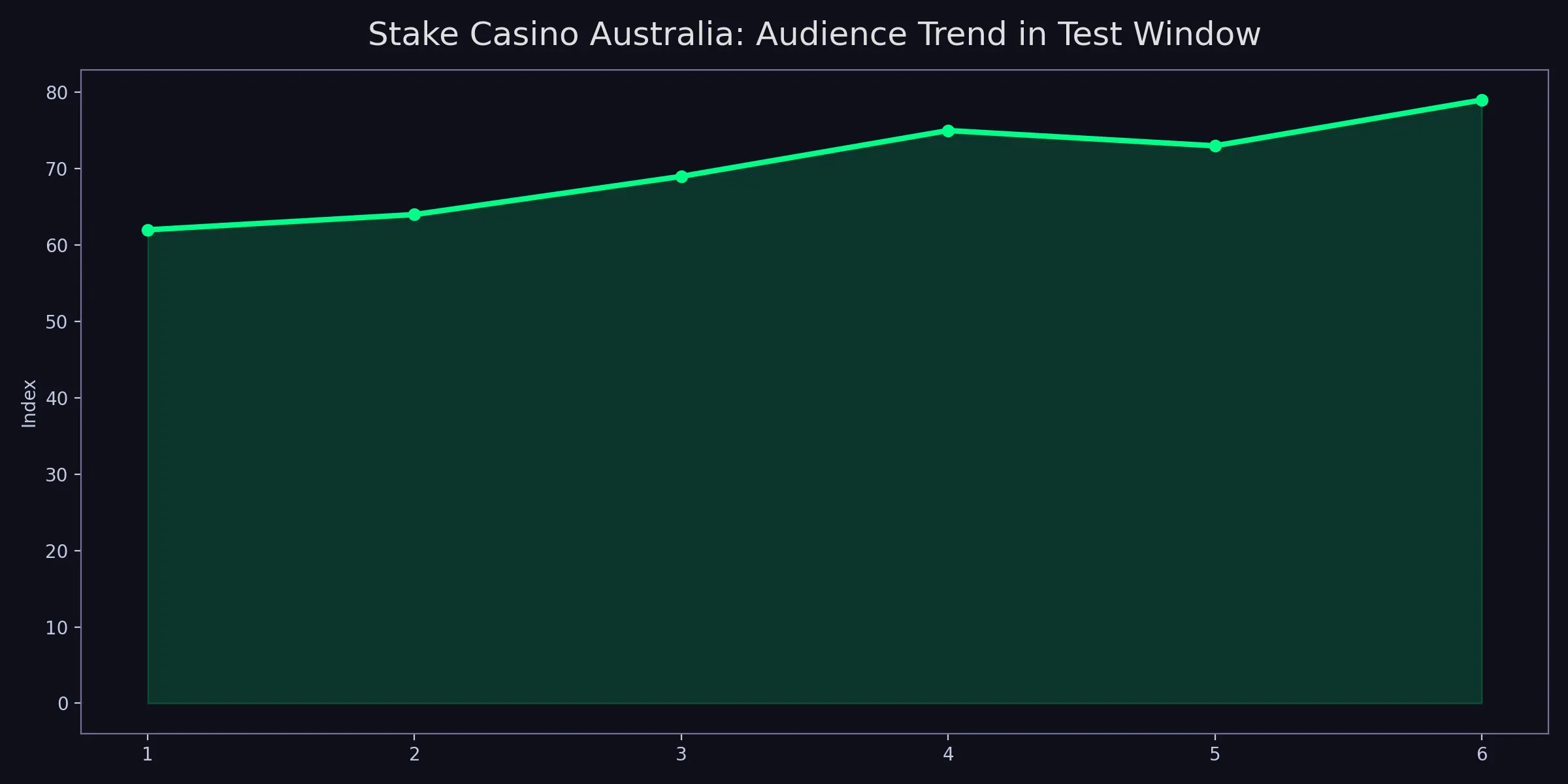

Brand chart for this page

This chart is interpreted as an evidence checkpoint, not as a decorative confidence signal. I map each movement to a documented behavior change, then verify whether that pattern repeats under comparable conditions. If a spike cannot be tied to repeatable inputs, it is treated as an anomaly rather than proof. This keeps the methodology honest when variance is high and narratives are tempting.

The practical reading rule is simple: ask what could falsify the current interpretation. If the answer is unclear, confidence should be reduced. I include this falsification step deliberately because it prevents over-claiming after visually persuasive trends. Strong review discipline is less about bold conclusions and more about controlled uncertainty.

For readers, the useful takeaway is transferable: build your own mini chart logic from session logs. Track a few stable metrics, compare weekly patterns, and update strategy only after repeated evidence. This routine turns isolated outcomes into a decision process you can trust.

This chart reinforces the same rule as the main page: process discipline increases decision clarity. I treat each data point as a prompt to review assumptions, not as a verdict in itself. If a point moves unexpectedly, I check whether the input conditions changed before changing the conclusion. That simple pause prevents narrative drift and keeps the methodology anchored.

Another key use of the chart is calibration. Calibration means matching confidence to evidence strength. If the signal is weak, confidence should be modest. If the signal is repeatedly stable, confidence can rise, but only with clear documentation. This is how a review stays useful under uncertainty instead of becoming overconfident copy.

I also use chart notes to set future test priorities. Sections with unstable behavior get scheduled for earlier retests, while stable sections move to lower-frequency verification. This prioritization keeps workload realistic and ensures that updates focus on practical risk areas first.
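One way to turn that prioritization into something mechanical is to rank sections by how often their logged outcome flips. This is a rough sketch with assumed names and an assumed instability measure (share of consecutive passes that changed result); it is not a description of how the retest queue here is actually built.

```python
def instability(outcomes: list[bool]) -> float:
    """Share of consecutive passes whose result flipped (0.0 means stable)."""
    if len(outcomes) < 2:
        return 1.0  # assumption: too little data is treated as unstable
    flips = sum(a != b for a, b in zip(outcomes, outcomes[1:]))
    return flips / (len(outcomes) - 1)


def retest_order(history: dict[str, list[bool]]) -> list[str]:
    """Return section names, most unstable first."""
    return sorted(
        history,
        key=lambda name: instability(history[name]),
        reverse=True,
    )


# Hypothetical pass/fail history per section, oldest pass first.
history = {
    "cashier": [True, True, True, True],
    "support": [True, False, True, False],
    "bonus terms": [True, True, False, False],
}
print(retest_order(history))  # ['support', 'bonus terms', 'cashier']
```

Note that a section that fails every time counts as stable under this measure: it is consistently rough, not unpredictable, so it needs a verdict rather than an early retest.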

For readers, the habit to borrow is straightforward: document, compare, recalibrate. Do this consistently and your own session decisions become less reactive and more deliberate over time.

📚 Long-form methodology appendix

This appendix documents how I keep review quality stable over time. The biggest risk in long-running projects is silent drift: language becomes smoother while evidence standards quietly weaken. To prevent that, I maintain explicit checkpoints for every major claim category and I refuse to publish score changes without traceable test evidence. If a result cannot be mapped to repeat inputs and clear outcomes, it remains provisional.

I also maintain a contradiction log. Every time new behavior conflicts with prior assumptions, I record the contradiction before resolving it. This preserves intellectual honesty and prevents retroactive storytelling. A contradiction is not failure; it is a signal that context changed or previous weighting was wrong. By preserving contradiction history, I can show readers how conclusions evolved instead of presenting false certainty.

Another core element is scenario diversity. Method integrity collapses when tests are run only in ideal conditions. I therefore include mixed scenarios: calm planned sessions, bonus-loaded sessions, and stress-edge troubleshooting windows. Each scenario reveals different weaknesses. A system that looks excellent in one mode can degrade in another. Capturing that variation is essential for practical guidance.

I also document reviewer-side controls. These include pre-test assumptions, confidence tier assignments, and revision thresholds. Without reviewer-side controls, methodology becomes ad hoc and hard to audit. With controls, changes become explainable and reproducible. This is what turns review writing from opinion content into operational analysis.

For readers building their own process, I recommend a compact template: define objective, define inputs, run controlled check, log outcome, assign confidence, and schedule retest. This sequence is lightweight enough for personal use and strong enough to reduce impulsive interpretation. The compounding effect appears quickly: fewer emotional pivots, better escalation quality, and more consistent decision outcomes.
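For anyone who prefers a structured note over free text, the template could look like the sketch below. Everything here is illustrative: the `SessionCheck` fields mirror the six steps, and the retest gaps in `RETEST_DAYS` are placeholder numbers I made up, since no intervals are specified on this page.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Hypothetical retest gaps in days; adjust to your own routine.
RETEST_DAYS = {"preliminary": 3, "directional": 7, "actionable": 30}


@dataclass
class SessionCheck:
    objective: str   # what you want to confirm
    inputs: str      # what you will do, stated before the session
    outcome: str     # what actually happened
    confidence: str  # one of the keys in RETEST_DAYS
    run_on: date     # when the controlled check was run

    def retest_on(self) -> date:
        """Schedule the retest sooner when confidence is lower."""
        return self.run_on + timedelta(days=RETEST_DAYS[self.confidence])


check = SessionCheck(
    objective="confirm how a pending withdrawal is communicated",
    inputs="one small withdrawal request, no active bonus",
    outcome="status shown as pending with no time estimate",
    confidence="preliminary",
    run_on=date(2024, 1, 1),
)
print(check.retest_on())  # 2024-01-04
```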

Final appendix note: method transparency is a user protection feature. It helps readers separate proven behavior from persuasive tone and encourages disciplined action in high-variance environments. That is why this page remains intentionally detailed and regularly maintained.